Seedance 2.0 Character Consistency: A Full Workflow Breakdown

Getting Seedance 2.0 to hold a character across shots takes more than a good prompt. Here's the full multi-step workflow.

Why Seedance 2.0 Loses Characters Between Shots

Seedance 2.0 launched in early 2026 with a specific promise: no more character drift. ByteDance's Dual-Branch Diffusion Transformer architecture separates identity data from motion data, and the vendor claims significantly improved stability for faces, clothing, and visual style across frames. In controlled single-shot tests, that claim largely holds. Across a multi-shot sequence, it does not hold cleanly, and understanding why is the first step to fixing it.

There are two distinct failure modes, and they look identical on screen. The first is natural model drift: the model's attention to your reference assets degrades over a sequence. Independent tests document this as "attention fatigue" by shots four or five, where features such as a nose ring, a specific jacket silhouette, or an exact hair shade erode gradually. The second is filter-triggered drift: facial and character descriptors activate a soft substitution response, where the model delivers an output but quietly replaces certain features with a safer interpretation. No error message. No block. Just a different character wearing your character's clothes.

The fix for each is different. Natural drift requires keyframe re-injection and seed-locking techniques. Filter-triggered drift requires prompt reframing before generation runs. Applying the wrong fix wastes both credits and time, which is why distinguishing them matters before you build your workflow.

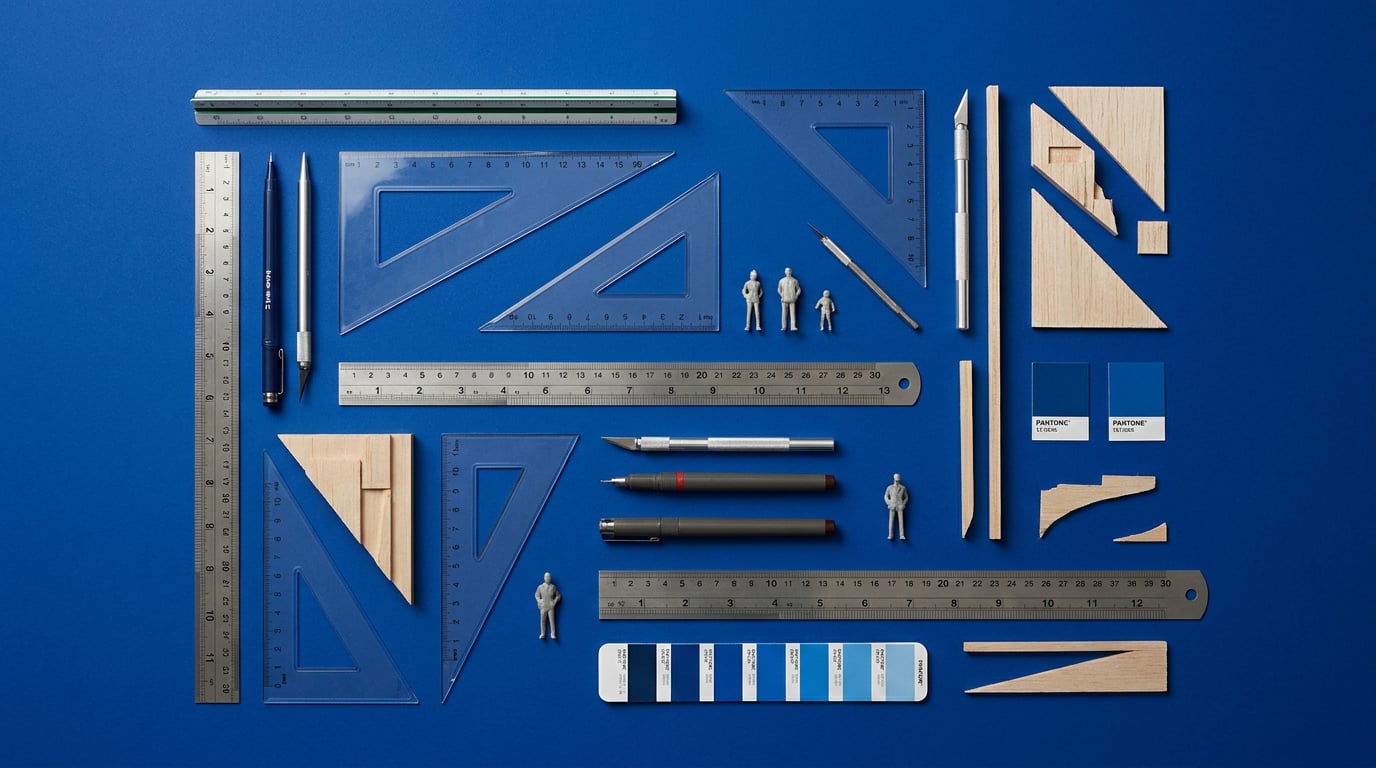

Reference images compound the problem when used naively. A single uploaded image gives Seedance 2.0 a two-dimensional read on your character. Without a multi-angle canonical sheet covering front, profile, and three-quarter views against neutral lighting, the model has to construct a three-dimensional representation from incomplete data, and that reconstruction drifts. Whether you are a product designer producing a brand mascot for a campaign, a game developer scripting an NPC through a battle cinematic, or a content creator building a recurring presenter avatar across six social videos, the result is the same: inconsistency that erodes audience recognition and multiplies re-generation costs.

What consistency failure looks like in practice is worth naming directly. Hair colour shifts mid-sequence. Costume details change between cuts: lapels appear or disappear, sleeve length varies. Facial proportions drift subtly, so the character reads as a different person by the fifth shot even though individual frames look plausible. These are not catastrophic failures that are easy to catch. They are incremental substitutions that pass a quick review and surface only in the edit.

Filter-triggered drift and natural model drift require different fixes. Identifying which one you are dealing with before you regenerate is the single biggest efficiency gain in a Seedance 2.0 workflow.

Building a Character Bible Before You Touch Generate

The most effective consistency fix happens before any generation runs. A character bible is not a mood board or a loose collection of references. It is a structured specification document that enumerates every visual attribute in a fixed order, so the model receives the same anchor in every prompt, across every session, from every team member.

Stensyl's Write surface is the right place to build this. Using Claude Sonnet 4.6 or GPT-5.5 from the model picker, you can draft a spec that locks attributes in plain language rather than vague adjectives. Claude Sonnet 4.6 is available from the Pro tier; GPT-5.5 from Starter. Both handle structured enumeration well. The format matters: list attributes in the same sequence every time. Body proportion and silhouette first, then costume from outer layer to detail, then colour palette with specific descriptors, then hair, then facial structure. Face comes last in the spec, which mirrors the prompt architecture that performs best in generation (covered in the next section).

A character spec for a marketing team's brand mascot might read: "Compact, broad-shouldered build. Wears a single-breasted structured coat in cobalt blue, four visible brass buttons, no collar. Wide-leg trousers in off-white. Hair: close-cropped, dark brown, no texture variation. Skin tone: medium warm, no marks. Eyes: deep-set, dark hazel." Every attribute enumerated. No "fashionable" or "striking" or "distinctive", which mean nothing to the model and shift across sessions.
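One way to keep the attribute order fixed is to store the spec as a structured record and render it the same way every time. The sketch below is illustrative only: `CharacterSpec`, `render_block`, and the split of the example mascot into five fields are assumptions made for this example, not a Stensyl or Seedance feature.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class CharacterSpec:
    """Hypothetical spec record. Field order is the order used in every prompt."""
    build: str    # body proportion and silhouette
    costume: str  # outer layer first, then detail
    palette: str  # colour palette with specific descriptors
    hair: str
    face: str     # facial structure comes last


def render_block(spec: CharacterSpec) -> str:
    """Join attributes in declaration order so every render is identical."""
    return " ".join(getattr(spec, f.name).strip() for f in fields(spec))


# The brand mascot from the example above, split into fields (an assumed split).
MASCOT = CharacterSpec(
    build="Compact, broad-shouldered build.",
    costume="Single-breasted structured coat, four visible brass buttons, "
            "no collar. Wide-leg trousers.",
    palette="Coat in cobalt blue. Trousers in off-white.",
    hair="Hair: close-cropped, dark brown, no texture variation.",
    face="Skin tone: medium warm, no marks. Eyes: deep-set, dark hazel.",
)

CHARACTER_BLOCK = render_block(MASCOT)
print(CHARACTER_BLOCK)
```

Because the record is frozen and the render order comes from the field declarations, two team members rendering the same spec get the same string.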

Once drafted, the spec lives in a Stensyl Project. The Project workspace is shared, so every team member pulls from the same source document rather than paraphrasing from memory. Paraphrase is where slow drift starts. A game development studio building a recurring NPC across six cutscenes cannot afford one animator describing "dark auburn hair" and another describing "reddish-brown hair" in the same sequence. The model treats these as different inputs.

Before committing to a description style, use Stensyl's Research surface to pull reference imagery and terminology. Searching for specific costume styles, colour names, or facial structure vocabulary before writing the spec ensures you are using terms that carry consistent weight in generative model prompting rather than terms that map loosely to several possible outputs.

The final step is pinning the spec to a Moodboard in the same Project. The Moodboard carries the canonical multi-angle character sheet: front, profile, three-quarter, all in neutral lighting with high-contrast features visible. This is the visual anchor the whole team returns to. When a prompt drifts in week three of a campaign, comparing the output against the Moodboard reveals which attribute has eroded before anyone wastes credits on regeneration.

A character bible stored in a Stensyl Project with a linked Moodboard reduces prompt variation across sessions more reliably than any generation technique alone.

Prompt Architecture That Survives the Filter

The order of descriptors in your prompt affects which model behaviours activate. Front-loading facial detail is the most common mistake in character-led generation work. Facial descriptors, particularly those that are specific or unconventional, are more likely to trigger soft filter substitutions. The model reads the prompt sequentially, and a dense face description early in the prompt primes a substitution response before the rest of the scene context has registered.

The prompt structure that holds up across disciplines follows this sequence: body proportion and silhouette, then costume and colour palette, then scene context, then facial description last and briefly. This is not about hiding the character from the model. It is about giving the model enough non-face context to anchor the generation before it processes facial attributes, which lowers the substitution rate.
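As a minimal sketch of that ordering, the helper below assembles a prompt from named sections and always places the face last. The section names, the `build_prompt` function, and the 12-word cap on the face section are assumptions for illustration; the article only says the face description should be brief.

```python
# Section order from the text: body, costume and palette, scene, then face.
PROMPT_ORDER = ("body", "costume", "scene", "face")
MAX_FACE_WORDS = 12  # assumed threshold for "briefly"; tune to taste


def build_prompt(parts: dict) -> str:
    """Assemble a prompt in the fixed section order, face last."""
    missing = [key for key in PROMPT_ORDER if not parts.get(key, "").strip()]
    if missing:
        raise ValueError(f"missing prompt sections: {missing}")
    if len(parts["face"].split()) > MAX_FACE_WORDS:
        raise ValueError("face description is too long; keep it brief")
    return " ".join(parts[key].strip() for key in PROMPT_ORDER)


# The caller's dict order does not matter; the output order is always the same.
prompt = build_prompt({
    "face": "Deep-set dark hazel eyes, medium warm skin tone.",
    "scene": "Standing at the edge of a product launch stage, crowd behind.",
    "body": "Compact, broad-shouldered figure.",
    "costume": "Structured coat in cobalt blue, wide-leg trousers in off-white.",
})
print(prompt)
```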

The bracketing technique builds on this. Instead of generating a character as the subject of a scene, frame the character as a prop within a scene. The model's filter logic reads "A cobalt-blue-coated figure stands at the edge of a product launch stage, crowd behind, spotlight above" differently from "A person with [specific features] stands on a stage." The first prompt describes a scene that contains a figure. The second describes a figure. For an automotive designer briefing a brand character for a launch film, this distinction is the difference between a consistent output and a substituted one. For a game developer scripting an NPC cutscene, the same framing turns a potential filter trigger into a scene description the model handles cleanly.

Negative prompts in Seedance 2.0 serve a specific function here. Use them to block the model's most common substitution defaults: generic face shapes, default hair colours, standard costume silhouettes that differ from your spec. Keep the negative prompt anchored to your character's actual spec deviations rather than generic quality terms. "No blonde hair, no round face, no rolled lapels" is more effective than "no distortion, no artefacts."
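A small helper can keep negative prompts tied to spec deviations. This is a sketch under stated assumptions: `build_negative_prompt` and the `GENERIC_TERMS` list are hypothetical, and the "no X, no Y" phrasing simply mirrors the example in the paragraph above.

```python
# Assumed list of generic quality terms to keep out of the negative prompt.
GENERIC_TERMS = {"distortion", "artefacts", "artifacts"}


def build_negative_prompt(deviations: list) -> str:
    """Turn observed spec deviations into a 'no X, no Y' negative prompt."""
    specific = [
        d.strip() for d in deviations
        if d.strip() and d.strip().lower() not in GENERIC_TERMS
    ]
    return ", ".join(f"no {d}" for d in specific)


negative = build_negative_prompt(
    ["blonde hair", "round face", "distortion", "rolled lapels"]
)
print(negative)
```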

Before committing credits to a generation run, use Ray on Stensyl to sense-check your prompt structure. Ray is Stensyl's creative-decision assistant, built to help you pick the right model and approach for a given task. Feed it your prompt and ask it to flag descriptor patterns that may conflict with filter logic. Ray operates on a fast Anthropic Haiku model, so the response is near-instant and costs nothing additional. For a motion design studio running a six-spot campaign, catching a filter-risky prompt before generation runs across all six shots saves a material number of credits.

Discipline framing matters in the prompt itself. A marketing team running a brand mascot through a product spot will write scene context differently than a game developer scripting a battle cinematic. The marketing prompt carries commercial scene language: stages, products, brand environments. The game prompt carries spatial and action language: terrain, enemies, camera cuts. Both work with the bracketing technique, but the scene context that anchors the character differs, and the model responds to that context. Write the scene you actually need, not a generic placeholder.

Chaining Shots in Film to Hold Consistency Across Scenes

Seedance 2.0's multi-shot workflow is where consistency problems compound most aggressively. Each new shot in a sequence is a new generation event. Without explicit anchoring at every handoff point, the model's attention to your character spec resets or degrades. Stensyl's Film surface organises multi-scene projects, and those scene boundaries are exactly the points where intervention is required.

The seed-locking workflow starts in Generate, not Film. Generate a reference frame first: a single four-second clip using your full character spec prompt and a fixed seed. This is the "DNA check" that confirms the model has resolved your character correctly before you invest in a full sequence. Lock the seed. Screenshot the output frame. That frame becomes the visual anchor imported into Film as the reference for every subsequent scene.

Scene-to-scene prompt inheritance is the key discipline in Film. Do not rewrite the character description for each scene. Copy the character block exactly and extend it with scene-specific variables: location, lighting, action, camera angle. The model reads the character description as a continuous thread when it is repeated verbatim rather than paraphrased. Paraphrase introduces variation the model interprets as a different character. An exhibition design team building a brand character for a multi-video stand experience, running six scenes across three installations, should maintain the character block as a locked string that is copied and pasted, never retyped.
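The locked-string idea can be sketched in a few lines: one constant holds the character block, and each scene only contributes its own variables. `SceneVars`, `scene_prompt`, and the label format for the scene variables are assumptions for this example.

```python
from dataclasses import dataclass

# The locked character block: defined once, never retyped per scene.
CHARACTER_BLOCK = (
    "Compact, broad-shouldered build. Structured coat in cobalt blue, "
    "wide-leg trousers in off-white. Hair: close-cropped, dark brown."
)


@dataclass(frozen=True)
class SceneVars:
    """Scene-specific variables that extend the character block."""
    location: str
    lighting: str
    action: str
    camera: str


def scene_prompt(scene: SceneVars, character_block: str = CHARACTER_BLOCK) -> str:
    """Prefix every scene with the verbatim character block."""
    extras = (
        f"Location: {scene.location}. Lighting: {scene.lighting}. "
        f"Action: {scene.action}. Camera: {scene.camera}."
    )
    return f"{character_block} {extras}"


scene_1 = scene_prompt(SceneVars("exhibition stand", "soft overhead", "waves", "wide"))
scene_2 = scene_prompt(SceneVars("product plinth", "warm key light", "points", "close-up"))
print(scene_1)
print(scene_2)
```

Only the text after the character block changes between scenes, which is the property the workflow depends on.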

Use Storyboards to map every scene that carries the character before generation runs. Stensyl's Storyboards surface lets you pre-map shot sequence, character presence, and camera logic. This serves two functions: it identifies which scenes require the character block (and which do not), and it surfaces scenes likely to cause drift early, when fixing them costs planning time rather than credits. A motion design studio running a campaign with multiple character-led spots can pre-identify the handoff points most vulnerable to attention fatigue and schedule keyframe re-injection at those points.

On concurrent generation limits: Pro tier allows two concurrent generations, and Studio allows four. This matters when iterating on a reference frame in Generate while a scene renders in Film. At Pro, you can run the DNA check clip and a scene generation simultaneously. At Starter or Lite, with one concurrent generation, you sequence them. Build this into your timeline if you are on a lower tier, because the reference frame must be confirmed before dependent scenes run, not after.
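For timeline planning, the tier limits above can be turned into a rough batch plan. The sketch below is a simplification under stated assumptions: it treats every scene as dependent on the reference frame, so the reference frame always runs alone first, and `plan_waves` and the `"reference-frame"` label are hypothetical names.

```python
# Concurrent generation limits per tier, as stated in the text.
CONCURRENCY = {"Lite": 1, "Starter": 1, "Pro": 2, "Studio": 4}


def plan_waves(tier: str, scene_ids: list) -> list:
    """Run the reference frame first, then scenes in batches of the tier limit."""
    limit = CONCURRENCY[tier]
    waves = [["reference-frame"]]  # must be confirmed before dependent scenes
    for start in range(0, len(scene_ids), limit):
        waves.append(scene_ids[start:start + limit])
    return waves


print(plan_waves("Pro", ["scene-1", "scene-2", "scene-3"]))
print(plan_waves("Starter", ["scene-1", "scene-2"]))
```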

A shot ledger is not optional for sequences longer than three scenes. Document every scene by shot ID, seed number, and exact character block text. A simple structure in a shared document is enough. When drift appears in shot six, the ledger tells you whether the character block was copied correctly or whether a paraphrase crept in. It also tells you which seed to reuse when you regenerate the failing scene, keeping it consistent with the established sequence rather than introducing a new visual interpretation.
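A ledger this simple can also be checked mechanically. The following is a minimal sketch, assuming the ledger is kept as a list of records; `LedgerEntry`, `find_paraphrased`, `seed_for`, and the seed values are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LedgerEntry:
    """One row of the shot ledger: shot ID, seed, exact character block."""
    shot_id: str
    seed: int
    character_block: str


def find_paraphrased(ledger: list, canonical_block: str) -> list:
    """Return shot IDs whose character block is not an exact copy."""
    return [e.shot_id for e in ledger if e.character_block != canonical_block]


def seed_for(ledger: list, shot_id: str) -> int:
    """Look up the seed to reuse when regenerating a failing shot."""
    for entry in ledger:
        if entry.shot_id == shot_id:
            return entry.seed
    raise KeyError(shot_id)


CANONICAL = "Slim build. Long grey coat. Hair: dark auburn, shoulder length."
LEDGER = [
    LedgerEntry("shot-01", 1234, CANONICAL),
    LedgerEntry("shot-02", 1234, CANONICAL),
    # A paraphrase crept in here: "reddish-brown" instead of "dark auburn".
    LedgerEntry("shot-06", 1234, CANONICAL.replace("dark auburn", "reddish-brown")),
]

print(find_paraphrased(LEDGER, CANONICAL))
print(seed_for(LEDGER, "shot-06"))
```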

Copy the character block exactly for every scene. Paraphrase is not a minor variation: the model reads it as a different character, and consistency fails from that point forward in the sequence.

Using Canvas to Automate Consistency Checks

Manual frame-by-frame review is feasible for a three-scene sequence. It does not scale to a campaign with six character-led spots or an exhibition installation with twelve video outputs. Canvas, Stensyl's node-based workflow editor, handles the automation through a combination of LLM Chat nodes and Image Generate nodes working in sequence.

The base workflow pipes each generated frame through an LLM Chat node configured for structured consistency auditing. The node receives two inputs: the generated frame (or a description of it, extracted in a prior node) and the character spec stored in Write. The audit prompt asks the model to compare specific attributes in a fixed order, matching the order in the character spec itself. The output should be structured: attribute name, expected value, observed value, pass or fail. A wall of text is not actionable. A table with pass/fail per attribute is.

For practical prompt construction in the audit node, specificity matters. "Compare the character's hair colour to the spec. Expected: close-cropped dark brown. State observed hair colour and flag any deviation." Run this for each enumerable attribute: hair, costume layer by layer, colour palette, proportion. Claude Sonnet 4.6 handles this structured comparison reliably. GPT-5.5 is an effective alternative at Starter tier. Both are available in the Canvas LLM Chat node under the same model picker available in Write.
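To make the audit output tabular, it helps to fix both the question and the answer format. The sketch below builds one audit prompt per attribute and parses a one-line reply into a row. The pipe-separated reply format, `AuditRow`, `audit_prompt`, and `parse_audit_line` are assumptions for this example; the Canvas node itself is not called here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditRow:
    """One audited attribute: name, expected, observed, pass or fail."""
    attribute: str
    expected: str
    observed: str
    passed: bool


def audit_prompt(attribute: str, expected: str) -> str:
    """Build the audit question for a single attribute."""
    return (
        f"Compare the character's {attribute} to the spec. "
        f"Expected: {expected}. "
        f"State observed {attribute} and flag any deviation. "
        "Answer on one line as: attribute | expected | observed | PASS or FAIL"
    )


def parse_audit_line(line: str) -> AuditRow:
    """Parse 'attribute | expected | observed | PASS' into an AuditRow."""
    parts = [p.strip() for p in line.split("|")]
    if len(parts) != 4 or parts[3].upper() not in {"PASS", "FAIL"}:
        raise ValueError(f"unparseable audit line: {line!r}")
    return AuditRow(parts[0], parts[1], parts[2], parts[3].upper() == "PASS")


question = audit_prompt("hair colour", "close-cropped dark brown")
row = parse_audit_line("hair colour | close-cropped dark brown | medium auburn | FAIL")
print(question)
print(row)
```

Rejecting replies that do not match the format keeps a free-text answer from being silently counted as a pass.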

Chaining Image Generate nodes in Canvas means that when the audit flags a failing scene, you regenerate only that scene rather than the full sequence. The Canvas structure keeps passing scenes untouched. For a motion design studio running a six-spot campaign, this means a single failing shot in spot four does not trigger a full re-run of spots one through three. For an exhibition design team building a brand character across a multi-video stand experience, it means a proportional drift catch in video nine does not pull the earlier eight back into the queue.
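The selection step behind that behaviour is small. As a sketch, assume the audit results are collected as a mapping from shot ID to per-attribute pass flags; `failing_shots` and the shot IDs are hypothetical.

```python
def failing_shots(audit_results: dict) -> dict:
    """Map each failing shot ID to the attributes that failed; skip passing shots."""
    failing = {}
    for shot_id, attributes in audit_results.items():
        failed = [name for name, passed in attributes.items() if not passed]
        if failed:
            failing[shot_id] = failed
    return failing


results = {
    "spot1-shot1": {"hair": True, "coat": True},
    "spot4-shot2": {"hair": False, "coat": True},
    "spot5-shot1": {"hair": True, "coat": True},
}

# Only spot4-shot2 goes back into the queue; passing shots stay untouched.
print(failing_shots(results))
```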

The Canvas loop is efficient, but it has a ceiling. When drift occurs at the sub-frame level, such as smearing on motion transitions or flickering at scene cuts, LLM-based audit nodes cannot catch it. Those are temporal artefacts that require visual inspection. That is the exit signal from the Canvas loop. Move to Stensyl's Editing surface for frame-level fixes. Editing is a desktop-only surface, so route the failing frames there for targeted correction rather than re-running generation.

What to Do When the Filter Still Blocks You

Some prompts will not clear Seedance 2.0's filter regardless of the techniques above. Knowing how to triage the block quickly, and when to stop trying, is as important as the consistency workflow itself.

The first distinction is hard block versus soft substitution. A hard block delivers no output, or a clearly broken one. A soft substitution delivers a plausible output where the character has been quietly redesigned: a different face shape, a different hair colour, a costume that was never in the spec. The fix is different for each. A hard block needs prompt reframing, usually reducing specificity on the triggering descriptor. A soft substitution needs seed and anchor adjustment, not prompt simplification, because the model has already accepted the prompt and generated its own interpretation.
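The triage rule can be written down as a small decision function. This is a sketch that assumes the failure has already been attributed to the filter rather than to natural drift; `Outcome`, `triage`, and the wording in `NEXT_STEP` are illustrative names that paraphrase the fixes described above.

```python
from enum import Enum


class Outcome(Enum):
    HARD_BLOCK = "hard block"
    SOFT_SUBSTITUTION = "soft substitution"
    OK = "ok"


# Next step per outcome, paraphrasing the fixes described in the text.
NEXT_STEP = {
    Outcome.HARD_BLOCK: "reframe the prompt; reduce specificity on the trigger",
    Outcome.SOFT_SUBSTITUTION: "adjust seed and anchor; do not simplify the prompt",
    Outcome.OK: "keep the result",
}


def triage(has_output: bool, output_broken: bool, failed_attributes: list) -> Outcome:
    """Classify a generation result as hard block, soft substitution, or OK."""
    if not has_output or output_broken:
        return Outcome.HARD_BLOCK
    if failed_attributes:
        return Outcome.SOFT_SUBSTITUTION
    return Outcome.OK


result = triage(has_output=True, output_broken=False, failed_attributes=["hair colour"])
print(result.value, "->", NEXT_STEP[result])
```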

For stylised characters in game development and motion design, reframing the character as a "style guide" or "visual reference" within the prompt often clears both types of filter activation. The model responds differently to "in the style of [described character]" than to "[described character] appears in the scene." The distinction signals to the model that you are specifying an aesthetic, not generating a specific person, which is a lower-risk classification.

Stensyl's Graphics surface offers another route. Generate a style-sheet still of the character in Graphics: a clean, flat visual reference showing the character in neutral pose against a solid background. Import this into Film or Generate as a visual reference image. A visual reference carries information that text description approximates. For a graphic designer producing a brand character for a campaign, a Graphics-generated style sheet is also a client-deliverable asset in its own right. The route to filter-safe generation and the route to a clean reference image are the same step.

There is a ceiling on what prompt engineering achieves. Some character types, particularly those with highly specific or unusual facial features, will not clear the filter at any prompt configuration. Recognising that ceiling by the third attempt, rather than the fifteenth, saves credits and project time. The pattern of soft substitutions across multiple attempts, where the same features are consistently replaced with the same alternatives, is a reliable signal that you have reached it.
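That stopping signal can be approximated in code. The sketch below assumes each attempt is logged as a mapping from attribute to the substitute the model produced, and flags the ceiling when the same substitution appears in each of the last three attempts. `hit_ceiling` and the log format are hypothetical.

```python
MAX_ATTEMPTS = 3  # the text suggests recognising the ceiling by the third attempt


def hit_ceiling(attempts: list) -> bool:
    """True if the same attribute was replaced the same way in each recent attempt."""
    if len(attempts) < MAX_ATTEMPTS:
        return False
    recent = attempts[-MAX_ATTEMPTS:]
    # Keep only (attribute, substitute) pairs present in every recent attempt.
    common = set(recent[0].items())
    for attempt in recent[1:]:
        common &= set(attempt.items())
    return bool(common)


log = [
    {"hair": "blonde", "face shape": "round"},
    {"hair": "blonde"},
    {"hair": "blonde", "lapels": "rolled"},
]
print(hit_ceiling(log))
```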

When a working prompt stack is confirmed, document it in the Project notes in Stensyl. The notes sit within the same Project that holds the character spec, the Moodboard, and the shot ledger. The next brief that uses this character does not start from scratch. It starts from a proven stack with a known seed, a confirmed character block, and a documented list of the filter interactions that required reframing. That accumulated knowledge is the compounding return on every hour spent on the first project.

| Discipline | Character Use Case | Key Workflow Priority |
|---|---|---|
| Marketing and Advertising | Brand mascot across a product campaign series | Anchor palette and costume before face; seed-lock per spot |
| Game Development | Recurring NPC through battle cinematics | Three-angle canonical sheet; keyframe re-injection at shot four |
| Content and Social | Presenter avatar across six or more videos | Multimodal reference slots; Canvas audit loop for temporal fixes |
| Motion Design | Character-led campaign spots | Prompt templates from Film; reference video for camera replication |
| Automotive Design | Brand character in a product launch film | Scene-bracket as "driver prop" to clear filter on styled figures |
| Exhibition Design | Brand character across multi-video stand experience | Shot ledger with seed log; Canvas chain to regenerate only failing scenes |

Seedance 2.0 is a capable model for character consistency work when the workflow is built around its actual failure points, not its marketing claims. The Dual-Branch DiT architecture delivers real stability within a single shot. The multi-shot workflow is where discipline is required: a structured character bible, a seed-locked reference frame, a verbatim character block carried across every scene, and a Canvas audit loop that catches drift before it compounds. Build those four elements before generation starts, and the model's tendency to substitute becomes a manageable variable rather than a constant surprise.
