Character Consistency in Seedance 2.0: A Full Workflow Guide

Seedance 2.0's content filter is only half the challenge. Here's the full workflow for locking a character across scenes, disciplines, and generations.

Why Character Consistency Breaks (and Where in the Pipeline)

Passing the content filter is only the first gate. Most character drift in Seedance 2.0 happens further downstream, at keyframe transitions, lighting changes, and scene cuts, even when the prompt that triggered the generation was syntactically clean.

Understanding why requires a brief look at what Seedance 2.0 actually does differently. The model uses a "Reference-First" Dual-Branch Diffusion Transformer architecture that separates identity data (face geometry, clothing construction) from motion data. Rather than reconstructing the character from scratch on every generation, it treats the character as a persistent asset threaded through the latent space. That separation is what gives Seedance 2.0 its headline consistency advantage over models that reconstruct from text alone.

But the architecture does not eliminate drift. It relocates it. Three failure patterns appear consistently across disciplines and workflows:

The Three Drift Patterns

- Feature erosion. Distinctive markers (nose rings, facial scars, logo embroidery on a jacket) gradually disappear as generation length increases. By frame 40 of a longer sequence, the model has deprioritised the detail in favour of ambient scene coherence. For a game development team maintaining armour detail across cutscenes, this is the most common complaint: the pauldron texture holds through shot two, softens in shot three, and is essentially gone by shot four.

- Identity softening at lighting transitions. When a scene environment shifts from neutral to high-contrast or dramatic lighting, the model recalibrates its understanding of the character's geometry. A product mascot that holds a clean facial structure across five daylight social campaign frames can lose cheekbone definition the moment a night-scene prompt is introduced. The identity anchor de-prioritises structure in favour of rendering the new light correctly.

- Pose rigidity breaking silhouette. When a neutral prompt is reinterpreted across shots, mirrored hands, reversed gaze direction, and altered stance proportions can all appear. A film storyboard protagonist drawn in a three-quarter left-facing pose in act one may be subtly right-facing by act two, breaking the visual grammar the director intended.

The root cause across all three is the same: minor prompt variation signals the model to rebuild rather than recall. Even synonym substitution (writing "cobalt jacket" in one scene and "blue coat" in the next) can trigger partial identity re-generation, because the model is pattern-matching against language tokens, not locking to a stored description.

Drift is not primarily a filter problem. It is a prompt variation problem. Standardise your language before you standardise anything else.

Building a Character Reference Package Before You Generate

The single most effective intervention in a character consistency workflow is one that happens before any generation runs: writing a complete, standardised character brief.

Stensyl's Write studio is the right place to build this. Using a capable writing model (GPT-5.5 on Starter tier or Claude Sonnet 4.6 on Pro) produces a more disciplined output than a text file drafted outside the platform, because the model can be prompted to enforce structural constraints: fixed colour codes rather than qualitative descriptors, specific proportions rather than vague adjectives, and an explicit list of banned synonyms that your team agrees never to use in a generation prompt.

What the Reference Package Should Contain

- Physical anchors. Hex codes or Pantone references for skin tone, hair, and eye colour. Proportional notes (height ratio, build category). Any distinctive permanent features (birthmarks, scars, piercings), written in the exact phrasing that will appear in every generation prompt.

- Costume inventory. Each garment named, coloured, and textured precisely. A marketing team building a brand mascot for a barista character should list the apron colour, logo placement, sleeve length, and collar style as fixed parameters, not as impressions.

- Banned descriptor list. Every synonym for a core physical feature that the team agrees to never use. If "scarlet hoodie" is the anchor, "red sweatshirt", "crimson top", and "ruby pullover" are all banned.

Once the written brief exists in Write, export it as a working document and pin it to the Stensyl Project. Every collaborator who generates from that character generates from the same source. This is particularly important for exhibition design teams running a guide character across a six-panel digital installation, where five different people may be generating individual panels across two weeks.
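The brief and its banned descriptor list can also be checked mechanically before a prompt is submitted. The following is a minimal sketch, not a Stensyl feature: the `CharacterBrief` class, its field names, and `lint_prompt` are hypothetical names introduced for illustration, and the check is plain substring and word-boundary matching on the prompt text.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterBrief:
    """Hypothetical container for the written brief (field names are illustrative)."""
    name: str
    anchors: tuple[str, ...]        # exact phrases that must appear verbatim
    banned: tuple[str, ...] = ()    # synonyms the team has agreed never to use


def lint_prompt(brief: CharacterBrief, prompt: str) -> list[str]:
    """Return a list of problems: missing anchors first, then banned synonyms found."""
    text = prompt.lower()
    problems = []
    for phrase in brief.anchors:
        if phrase.lower() not in text:
            problems.append(f"missing anchor: {phrase}")
    for phrase in brief.banned:
        # Word-boundary match so a banned phrase is not found inside a longer word.
        if re.search(rf"\b{re.escape(phrase.lower())}\b", text):
            problems.append(f"banned descriptor: {phrase}")
    return problems


brief = CharacterBrief(
    name="Mascot",
    anchors=("scarlet hoodie",),
    banned=("red sweatshirt", "crimson top", "ruby pullover"),
)
print(lint_prompt(brief, "A barista in a crimson top pours coffee."))
```

An empty list means the prompt uses the anchor phrasing and none of the banned synonyms. It says nothing about whether the generation itself will hold.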

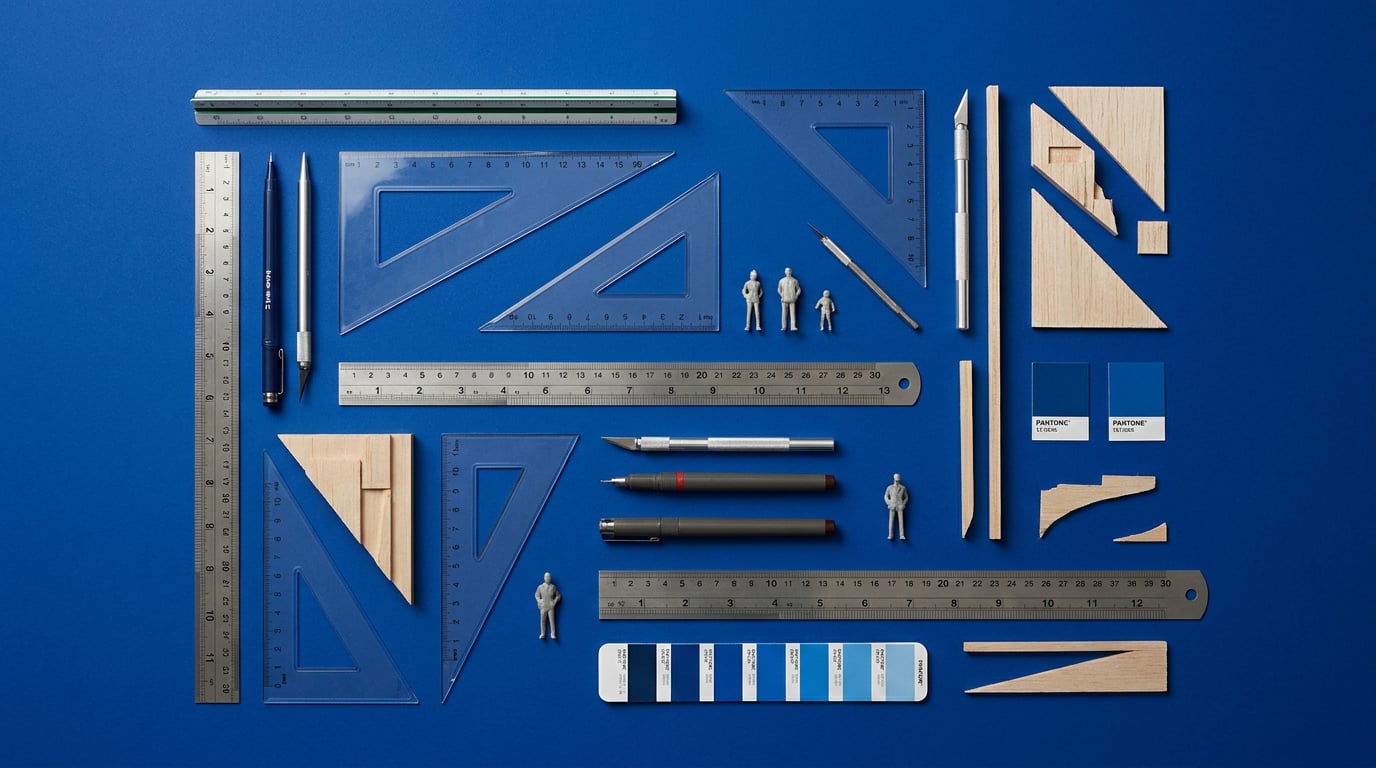

Building the Visual Reference in Moodboards

Stensyl's Moodboards surface is the complement to the written brief. Assemble a three-angle character sheet (front, profile, and three-quarter view) in neutral lighting with high-contrast markers visible. The visual and written references should agree exactly: if the brief says the character has silver-framed glasses, the moodboard image should show them.

The structure of the moodboard adapts by discipline. A game developer building a cinematic protagonist needs silhouette rigidity documented (the outline should read identically from front and side). A marketing and advertising team building a brand mascot needs the facial structure isolated against a white background so no ambient colour bleeds into the identity reference. A film and set designer assembling a performer look-book needs the hair colour documented in both warm and cool lighting to understand how it will shift across different scene environments.

A moodboard is not decoration. It is a pre-generation constraint system. Build it before you open Generate, not after your first drift failure.

Using Storyboards and Film to Chain Scenes Without Losing the Character

Sequential scene generation is where most character consistency failures compound. Each new scene is an opportunity for the model to reinterpret the character slightly, and without structural planning, those small reinterpretations accumulate into a character that looks noticeably different by the fifth shot than they did in the first.

Storyboards as a Credit-Saving Gate

Stensyl's Storyboards surface allows you to plan the full shot sequence before generating any video. This is more than a planning convenience: it is a consistency tool. When you can see the entire sequence mapped as still frames, you can identify where the lighting changes, where the camera angle shifts, and where the character's costume will need to be explicitly re-anchored in the prompt. A motion designer building a character-led title sequence across eight shots can flag shot five, the one with the dramatic backlight, as a consistency risk before spending credits on it.

Board every scene. Annotate which ones represent identity risk. Only then open Film or Canvas.
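The annotation step can be sketched as a simple comparison between consecutive shots. This is an illustrative example only: the `Shot` fields and the `flag_identity_risks` helper are hypothetical, and the rule used here (any change in lighting or camera angle from the previous shot counts as a risk) is an assumption about what a team might choose to flag.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    number: int
    lighting: str   # e.g. "daylight", "backlight", "night"
    camera: str     # e.g. "three-quarter left", "profile"


def flag_identity_risks(shots: list[Shot]) -> dict[int, list[str]]:
    """Map shot number -> reasons it differs from the shot before it."""
    risks: dict[int, list[str]] = {}
    for prev, cur in zip(shots, shots[1:]):
        reasons = []
        if cur.lighting != prev.lighting:
            reasons.append(f"lighting: {prev.lighting} -> {cur.lighting}")
        if cur.camera != prev.camera:
            reasons.append(f"camera: {prev.camera} -> {cur.camera}")
        if reasons:
            risks[cur.number] = reasons
    return risks


board = [
    Shot(4, "daylight", "three-quarter left"),
    Shot(5, "backlight", "three-quarter left"),
    Shot(6, "daylight", "profile"),
]
for number, reasons in flag_identity_risks(board).items():
    print(number, reasons)
```

Note that the shot after a lighting change is flagged as well, since returning to the earlier lighting is also a transition.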

Film as a Multi-Scene Studio

Stensyl's Film surface is designed for exactly this workflow: multi-scene cinematic video where parameters persist across clips. Carry the identity block from your written brief as a locked scene parameter in Film, so you are not rewriting the character description for each new clip. The scene variables (location, lighting condition, action) change per clip. The identity block does not.

The logic is the same for a content creator producing a five-scene social video as it is for a motion designer building a cinematic title sequence. The output format differs: the social creator needs vertical 9:16 clips suitable for carousel or Reels; the motion designer needs sequences that will be composited in a downstream tool. But in both cases, the character prompt is a fixed parameter, not a per-scene decision.

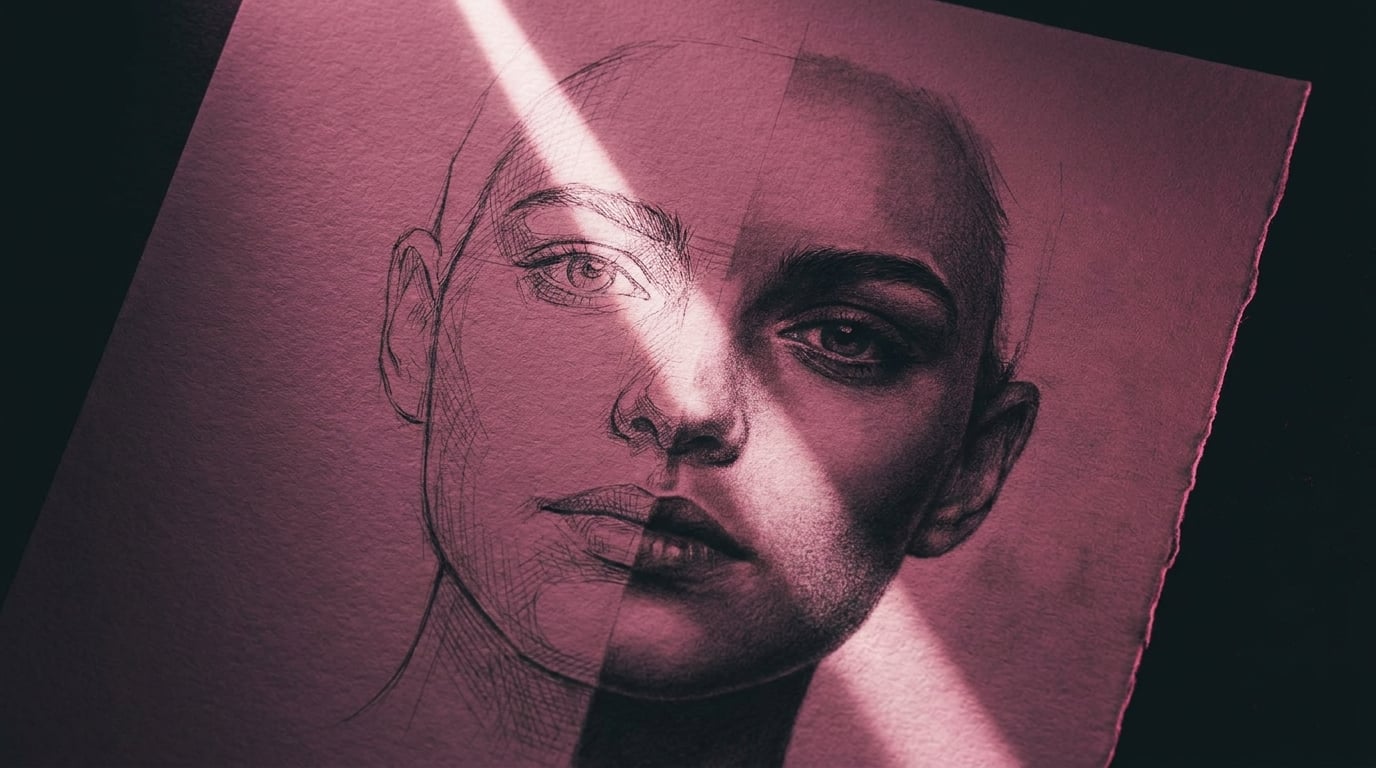

Canvas: Wiring a Visual Anchor Into Every Generation

For workflows that need more precise control, Stensyl's Canvas node-based editor provides a structural solution to drift. Use an Image Generate node to produce a clean, neutral reference still of your character: front-facing, flat lighting, no background detail. Wire that image node into the Video node as the first-frame anchor for each subsequent scene generation.

This is more reliable than text-only prompting because the model is receiving a visual identity input, not just a linguistic description of one. The first frame acts as a geometric anchor: the face proportions, the costume construction, and the colour values are all present as pixels rather than as language tokens subject to synonym drift. For an automotive designer rendering a mascot figure across different showroom lighting environments, wiring the reference frame into each scene variation in Canvas is the most credit-efficient way to maintain consistency without regenerating the character from scratch each time.

Generating a clean reference still in Generate before entering Film or Canvas is the most effective generation-stage step for reducing character drift across multi-scene workflows. Text-only prompting cannot replace a visual anchor.

Prompt Architecture That Holds Across Filter and Drift

A drift-resistant prompt has a specific structure. The identity block comes first. Scene variables come last. Nothing between them introduces synonyms for physical features already defined in the identity block.

In practice, this means your prompt opens with the @Character tag and a compact recitation of the canonical physical anchors from your written brief: exact hair colour in the phrasing you locked in Write, exact clothing descriptor, exact distinguishing feature. Then the scene information: location, time of day, camera angle, action. The character does not change between scenes. The scene changes around the character.
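The ordering described above can be sketched as a small prompt builder. This is a hedged illustration: `build_prompt` and `IDENTITY_BLOCK` are hypothetical names, the example descriptors are placeholders, and the exact syntax Seedance 2.0 expects around the @Character tag is an assumption here.

```python
# Placeholder identity block; in practice, paste the exact phrasing locked in the brief.
IDENTITY_BLOCK = "@Character, copper bob haircut, cobalt jacket, silver nose ring"


def build_prompt(identity_block: str, *, location: str, time_of_day: str,
                 camera: str, action: str) -> str:
    """Identity block first, scene variables last, nothing in between."""
    scene = ", ".join([location, time_of_day, camera, action])
    return f"{identity_block}. {scene}."


scene_1 = build_prompt(IDENTITY_BLOCK, location="cafe interior",
                       time_of_day="morning", camera="three-quarter left",
                       action="pours coffee")
scene_2 = build_prompt(IDENTITY_BLOCK, location="street exterior",
                       time_of_day="dusk", camera="three-quarter left",
                       action="walks toward camera")
print(scene_1)
print(scene_2)
```

Because the identity block is passed in as one unedited string, every scene prompt starts with identical character wording and only the trailing scene clause varies.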

Using Ray to Check Before Committing Credits

Before running a generation, use Stensyl's Ray assistant to sense-check whether the prompt structure is likely to cause problems. Ray is locked to a fast Anthropic Haiku model and is designed for exactly this kind of rapid creative decision support. Ask it directly: does this prompt introduce any synonym variation from the previous scene? Does the lighting descriptor create ambiguity about the character's physical features? Ray cannot see inside Seedance 2.0's processing, but it can flag structural issues in the prompt language before you commit credits to a generation that is likely to drift.

Lighting and Background as Consistency Variables

Neutral or consistent environments reduce model ambiguity about who the character is. When the scene background is complex or dramatically lit, the model has more competing visual information to balance against the identity anchor. A graphic designer locking a brand character across three social carousel frames should specify a plain or minimally textured background for at least the first frame in the sequence, establishing the character clearly before introducing environmental variation.

For an exhibition designer keeping a guide character consistent across a six-panel digital installation, the background treatment should remain within the same colour temperature and luminance range across all panels. Dramatic shifts in ambient light, such as a warm gallery scene followed by a cool exterior scene, are where identity softening most frequently occurs.

When the Prompt Passes but the Output Still Drifts

If a prompt clears the filter and the generation still shows drift, the correction workflow is straightforward: pull the best frame from the drifted output (the one where the character looks most correct), save it as a reference image, and feed it back into the next generation as a first-frame anchor in Canvas. Do not rewrite the prompt. Do not introduce new descriptors to compensate for the drift. The language is not the problem at this point; the fix is a visual re-anchor.

This iterative anchoring process resets the identity baseline without requiring a full regeneration from the written brief. It is faster, cheaper, and produces more consistent results than attempting to describe the drift correction in text.

Credit-Efficient Iteration: Getting Consistency Without Burning Your Plan

A realistic character consistency workflow across five scenes consumes more credits than a single-scene generation. Accounting for reference stills, test generations, and iterative corrections, a contained social campaign with one character across five scenes typically runs to between 15 and 25 generation attempts before it is production-ready. A multi-scene cinematic sequence with lighting variation and camera movement runs higher.

Matching Tier to Project Scale

| Project Type | Recommended Tier | Credits Available | Rationale |
|---|---|---|---|
| Social campaign, 3-5 scenes, one character | Starter (£22/mo) | 2,500 | Sufficient for reference generation, test runs, and final outputs with room for corrections |
| Multi-scene cinematic sequence, 6-10 shots | Pro (£42/mo) | 6,000 | Accommodates higher iteration count, lighting variation testing, and Canvas batch workflows |
| Ongoing brand mascot content, multiple campaigns | Studio (£84/mo) | 12,500 | Supports parallel project work and 4 concurrent generations for team efficiency |
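The tier choice comes down to simple arithmetic: expected attempts multiplied by the credit cost of one attempt, compared against the allowance. The sketch below uses the credit allowances from the table, but the per-attempt cost is not stated in this guide, so the values passed in the usage lines are placeholders to replace with the cost shown for your chosen model. `smallest_tier` is a hypothetical helper name.

```python
from typing import Optional

# Monthly credit allowances from the table above, in ascending order.
TIER_CREDITS = {"Starter": 2_500, "Pro": 6_000, "Studio": 12_500}


def smallest_tier(attempts: int, credits_per_attempt: int) -> Optional[str]:
    """Return the smallest tier whose allowance covers the planned attempts."""
    needed = attempts * credits_per_attempt
    for tier, credits in TIER_CREDITS.items():  # dicts keep insertion order
        if credits >= needed:
            return tier
    return None  # no single tier covers the plan


# 25 attempts is the upper end of the range quoted above.
# 80 and 200 credits per attempt are PLACEHOLDER costs, not real prices.
print(smallest_tier(25, 80))
print(smallest_tier(25, 200))
```

Running the calculation with the upper end of the attempt range leaves headroom for corrections rather than planning to the exact allowance.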

The Storyboards stage is your primary credit-saving mechanism. Boarding the full sequence before generating means you enter Film or Canvas knowing exactly which scenes are high-risk for drift and which are straightforward. You do not waste credits discovering mid-workflow that shot six has a lighting transition that requires a different anchoring strategy.

Batching in Canvas

Canvas allows you to wire a single character reference frame into multiple scene variation nodes within the same workflow. Rather than generating the character reference fresh for each scene, you generate it once and route it into every Video node in the sequence. This is meaningfully more credit-efficient than running each scene independently, and it enforces visual consistency by design: every scene receives the same first-frame input.
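The batching pattern can be pictured as a small graph with one reference node feeding every video node. The representation below is purely illustrative: Canvas is a visual editor, and the dictionary layout, node names, and `batched_graph` helper are assumptions made for this sketch, not an export format or API.

```python
def batched_graph(scene_prompts: list[str]) -> dict[str, dict]:
    """Build a graph with one shared reference still wired into every video node."""
    graph = {"ref": {"type": "image_generate", "inputs": []}}
    for i, prompt in enumerate(scene_prompts, start=1):
        graph[f"video_{i}"] = {
            "type": "video",
            "prompt": prompt,
            "inputs": ["ref"],  # same first-frame anchor for every scene
        }
    return graph


def count_nodes(graph: dict[str, dict], node_type: str) -> int:
    """Count nodes of a given type in the graph."""
    return sum(1 for node in graph.values() if node["type"] == node_type)


graph = batched_graph(["cafe, morning", "street, dusk", "studio, night"])
print(count_nodes(graph, "image_generate"), count_nodes(graph, "video"))
```

However many scenes are added, the graph contains a single reference generation, which is the source of both the credit saving and the shared first frame.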

Editing for Frame-Level Corrections

Stensyl's Editing surface (desktop only) provides frame-level correction capability after generation. When a character's output shows minor drift in one or two frames but the rest of the clip is usable, Editing allows targeted fixes without re-running the full video generation. This is the right tool for small feature erosion issues, such as a logo on a jacket that faded slightly or an eye colour that shifted two frames before the cut.

Knowing When to Stop

A social asset destined for Instagram Stories at 1080 x 1920 has a lower consistency threshold than a broadcast deliverable being reviewed at full resolution on a calibrated monitor. Minor nose ring visibility variance across a five-frame carousel is imperceptible to a social audience scrolling at speed. The same variance in a 4K exhibition installation at 3 metres will be visible. Set your consistency threshold based on the delivery format before you start iterating, and resist the pull to keep regenerating once you have crossed it.

Handoff: Keeping the Character Consistent Beyond Stensyl

A character consistency workflow that lives only in one person's session history is not a workflow. It is a one-time achievement that cannot be reproduced by a colleague, a freelancer, or your future self three months from now.

Storing the Character Package Inside a Project

Stensyl's Projects surface is the canonical home for everything the character consistency workflow produced: the written brief exported from Write, the moodboard assembled in Moodboards, the reference still generated in Generate, and the canonical prompt with its locked identity block. Pin all of these to a single Project so that any team member opening the Project has everything they need to generate from the same character without asking anyone.

Include the shot ledger (the spreadsheet tracking which seed was used for which scene) in the Project alongside the creative assets. Regenerating a specific shot weeks later requires knowing the exact seed that produced the original; without it, you are starting from scratch on a scene the client has already approved.
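A shot ledger can be as simple as a CSV file with one row per shot. The following sketch uses Python's standard `csv` module; the column names (`shot`, `seed`, `prompt`) and the helper names are assumptions for illustration, and the seed values are invented examples.

```python
import csv
import io

FIELDS = ["shot", "seed", "prompt"]  # hypothetical ledger columns


def write_ledger(rows: list[dict], fh) -> None:
    """Write ledger rows to an open text file (or file-like object)."""
    writer = csv.DictWriter(fh, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


def read_seed(fh, shot: int):
    """Return the seed recorded for a shot, or None if the shot is not listed."""
    for row in csv.DictReader(fh):
        if row["shot"] == str(shot):  # CSV values are read back as strings
            return int(row["seed"])
    return None


# In-memory demo; for a real file use open(path, "w", newline="").
ledger = io.StringIO()
write_ledger(
    [
        {"shot": 1, "seed": 48213, "prompt": "cafe interior, morning"},
        {"shot": 2, "seed": 90417, "prompt": "street exterior, dusk"},
    ],
    ledger,
)
ledger.seek(0)
print(read_seed(ledger, 2))
```

Storing the full prompt next to the seed means a colleague can regenerate an approved shot without reconstructing the wording from memory.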

Graphics for Static Character Assets

Stensyl's Graphics surface allows you to generate static character assets (brand mascots, UI avatars, exhibition guide figures) that share the same visual DNA as the video-generated character. A graphic designer who has locked a mascot character in Film for a social campaign can use the same canonical reference and prompt structure in Graphics to produce a static version for print collateral, banner ads, or a web landing page, maintaining continuity across formats without the visual disconnect that comes from generating the static and video versions independently.

The Character Consistency Workflow: Final Checklist

- Write: Draft the canonical character brief with physical anchors, costume inventory, and banned descriptor list. Export and pin to the Project.

- Moodboards: Assemble the three-angle visual reference sheet. Match it exactly to the written brief. Pin to the Project.

- Storyboards: Board the full shot sequence. Annotate identity-risk scenes before generating anything.

- Generate: Produce a clean, neutral reference still. This becomes the visual anchor for all subsequent scene generations.

- Film or Canvas: Generate the scene sequence. In Canvas, wire the reference still into each Video node. In Film, carry the identity block as a locked parameter.

- Editing: Apply frame-level corrections for minor drift. Desktop only.

- Graphics: Produce any static versions of the character from the same reference package.

- Project: Store the brief, moodboard, reference still, canonical prompt, and shot ledger together. Make the Project accessible to every team member who will touch the character.

The whole bundle saved to the Project is what makes the character reproducible. Seedance 2.0's DiT architecture gives you the technical foundation for consistency. The reference package, the shot plan, and the locked prompt structure are what make it survive a handoff.
